Over the past year alone, AI tools that once surfaced insights and handed them to a human for action have begun carrying out those actions themselves. Sixty-two percent of organizations are now experimenting with AI agents, according to McKinsey’s latest State of AI global survey, and many are deploying agents into production without effective governance in place.

The level-up in speed and efficiency offers a massive competitive advantage. It also comes with risk. The moment an AI agent places a purchase order or modifies a budget forecast, the organization has crossed a threshold. AI is no longer an analytical aid; it’s running critical operations. Any mistakes or hallucinations introduce risk to the company.

For CDAOs and governance leaders, this is the moment when the discussion moves to the boardroom. Prior governance and oversight models were developed based on human decision-making, and data inputs drove the decision process. People were the ultimate filter. Agents have removed that filter. That’s why agentic AI governance is a core infrastructure issue that organizations need to address today.

As AI agents’ role extends beyond creating business insight to driving real business decisions, governance can no longer stay passive. AI governance needs to become an active control infrastructure that is embedded throughout each level of the system in which the agents operate.

What Is AI Agent Governance?

In simple terms, agentic AI governance comprises the rules, regulations, and technology that ensure self-determining AI agents operate within predetermined bounds, provide accurate outputs, and can be traced throughout their operational lifecycle.

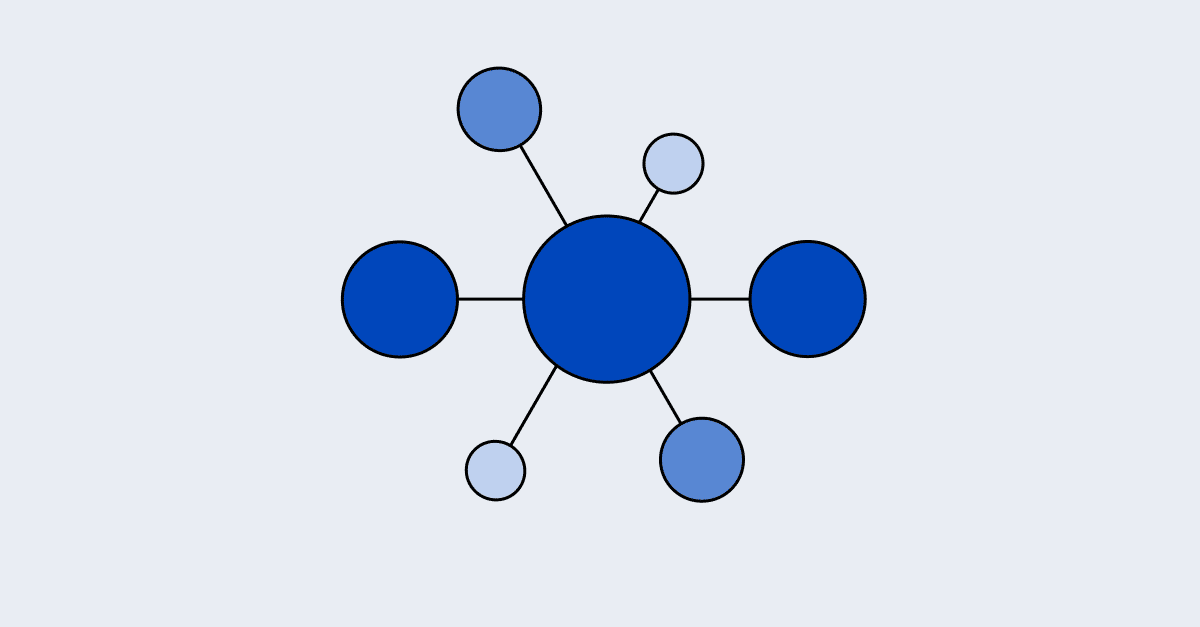

A comprehensive AI agent governance model includes six major components:

- Data governance: The guardrails and limitations that determine what data the agents have access to and under what circumstances.

- Metric governance: The appropriately defined metrics (e.g., for revenue, risk, and/or performance) that the agents use as their single source of truth.

- Access control: The specific roles within an organization that have authorization to invoke an agent and under what permissions they may do so.

- Runtime oversight: The capacity to oversee, stop, or intervene in the agent’s actions while it’s in operation.

- Explainability: The capability to trace agent decisions and assess their validity based on the target input and logic.

- Accountability: The clear identification of the parties or groups within an organization who are accountable for the consequences of an agent’s actions.

In the sprint to leverage agentic AI in the modern business world, many organizations prioritize speed to market over establishing governance boundaries and ensuring the right guardrails are in place. While 69% of organizations are deploying AI agents, only 23% have a formal agent governance strategy. Contrary to assumption, these governance protocols seldom slow innovation. They create the conditions for autonomy to safely scale.

Why Governance Is Now an Urgent Matter

AI governance has long been seen as a best practice, but it’s not exactly the most exciting topic for discussion in the boardroom. That’s quickly changing as the level of autonomy grows, with agents evolving beyond simply generating insights and giving advice to taking multi-step actions on behalf of humans.

“You can think of AI as being a catalyst. So it allows you to do things faster — and that’s good, but it can also be bad. It can allow you to do subpar work faster,” warns Jepson Taylor, Founder and CEO at VEOX. The risks are front and center, solidified by several compelling truths.

Agents Don’t Just Recommend, They Act

You don’t have to look very far into the past to find AI iterations that only made suggestions and answered common queries and prompts. Today’s agentic AI systems can carry out a wide range of functions, like initiating procurement workflows, raising service tickets, modifying pricing logic, or adjusting operational parameters, without anyone else being involved. While this autonomy is incredibly valuable to organizational processes, the risk of mistakes compounds, with serious consequences.

Scale Increases Risk

BI environments adopting autonomous AI agents need to keep governance and semantic consistency as a foundation. Previously, a data analyst could spot an incorrectly calculated metric on a dashboard before anyone acted on it. An agent is more likely to act on the flawed metric without questioning it, and the error can then spread through many connected workflows, downstream systems, and automated decisions without anyone noticing.

Faster Decision-Making

Autonomy-driven systems reduce review cycles by design. That’s the value proposition for autonomous AI agents. But executives need to be aware that the tradeoff for decision-making speed is the risk of systemic errors. When decisions are made faster than people can check them in real time, governance must be built into the infrastructure itself, not added on after the fact.

Regulatory Pressure Is Building

Compliance leaders are navigating more than a checklist. Regulators want to know what AI agents did, why they did it, and how to trace the decision back through the system. That expectation is reshaping how organizations think about transparency, accountability, and risk. Organizations that are slow to adapt to agentic AI governance standards today will likely find these regulatory demands a stark necessity tomorrow.

The Risks of Unmanaged Agent Behavior

Autonomy accelerates existing risks. When agents operate without governance guardrails, common data discrepancies become operational problems at a speed and scale that traditional controls were never meant to handle.

- Metric drift at scale: When agents use different definitions of the same business metric, they make conflicting decisions in different systems. This is where AI loses credibility for CDAOs: not because of one mistake, but because of a pattern of outputs that can’t be explained.

- Uncontrolled workflow execution: Agents without proper limitations can trigger downstream actions that are difficult, if not impossible, to undo. A poorly scoped agent in a procurement or finance workflow can cause real problems for the business before anyone has a chance to step in.

- Lack of explainability: The organization can’t defend its choices if an agent can’t explain why it made them. For leaders in governance, this is a direct risk to the audit. Regulators and internal risk teams are putting more pressure on companies to have a clear, traceable chain from data input to agent action.

- Violations of access control: Agents with too many permissions can access data that they shouldn’t be able to. This is where fragmented access logic becomes a problem for architects: permissions made for people don’t always work for autonomous systems.

- Shadow AI expansion: Departments are using autonomous agents without central control, often doing so quickly and with good intentions. As a result, many agents work with different data, definitions, and assumptions, and none of these are visible to the teams responsible for enterprise risk.

- Semantic inconsistency across agent chains: In multi-agent architectures, where one agent passes context to another, inconsistent business logic compounds at every step. What starts as a small difference in definitions for one agent becomes a chain of outputs that don’t align by the end.
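To make the metric drift risk concrete, here is a minimal sketch in which two agents apply the same policy threshold to the same order but compute margin differently. The figures, the two margin definitions, the `MARGIN_FLOOR` threshold, and all function names are hypothetical and chosen only for illustration.

```python
# Hypothetical figures for a single order; not taken from any real system.
order = {"revenue": 1000.0, "cogs": 600.0, "shipping": 120.0}

def margin_pricing_agent(o):
    # Definition A (assumed): margin excludes shipping costs.
    return (o["revenue"] - o["cogs"]) / o["revenue"]

def margin_procurement_agent(o):
    # Definition B (assumed): margin includes shipping costs.
    return (o["revenue"] - o["cogs"] - o["shipping"]) / o["revenue"]

MARGIN_FLOOR = 0.30  # assumed policy threshold shared by both agents

def decide(margin):
    # Same rule for both agents; only the metric definition differs.
    return "approve" if margin >= MARGIN_FLOOR else "escalate"

decisions = {
    "pricing": decide(margin_pricing_agent(order)),          # margin 0.40
    "procurement": decide(margin_procurement_agent(order)),  # margin 0.28
}
print(decisions)
```

Both agents follow the rule correctly, yet they reach opposite decisions on the same order. Neither output looks wrong in isolation, which is why this kind of drift is hard to trace after the fact.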

What Enterprise AI Agent Governance Requires

Depending on where you are in the organization, governance looks different. But the basic need is the same for every role: structured control that grows with freedom.

Executives

Accountability is the first step in executive-level governance. When an agent takes an action, someone needs to be responsible for the results, and the organization needs clear procedures for handling agents that behave unacceptably. These structures should be set up before deployment. Aligning agent autonomy with the organization’s overall risk appetite is a vital leadership decision with top-down implications.

CDAOs

Centralizing metric definitions is the most important thing a CDAO can do to improve governance. When agents in different systems use different calculations for the same business idea, the results can’t be trusted, and the damage keeps getting worse without anyone noticing. Governance must be based on architecture, not on rules. The data foundation needs to have semantic consistency built in.

Governance and Compliance Teams

When agents are working on their own, runtime monitoring is no longer optional. Compliance teams need logged decision trails that show what an agent did, what data it looked at, and which logic it used. Policy enforcement can’t depend on human review cycles that are slower than the agents themselves. The governance infrastructure must be able to flag, limit, or stop agent behavior instantaneously.
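One way to picture this is a small gate that every agent action passes through, which records a decision trail and can flag or stop actions as they happen. The sketch below is only an illustration under stated assumptions: `RuntimeGate`, `DecisionRecord`, the `amount` field, and the spending limit are hypothetical names and values, not part of any specific product.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone

@dataclass
class DecisionRecord:
    # What the agent did, what data it looked at, and which logic it used.
    agent_id: str
    action: str
    inputs: dict
    logic_version: str
    outcome: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

class RuntimeGate:
    """Hypothetical checkpoint that every agent action must pass through."""

    def __init__(self, max_amount):
        self.max_amount = max_amount  # assumed policy limit
        self.halted = set()           # agents stopped by an operator
        self.trail = []               # append-only decision trail

    def halt(self, agent_id):
        self.halted.add(agent_id)

    def submit(self, agent_id, action, inputs, logic_version):
        if agent_id in self.halted:
            outcome = "blocked"       # stop: agent has been halted
        elif inputs.get("amount", 0) > self.max_amount:
            outcome = "flagged"       # limit: needs human review
        else:
            outcome = "allowed"
        self.trail.append(
            DecisionRecord(agent_id, action, inputs, logic_version, outcome)
        )
        return outcome

    def export(self):
        # One JSON object per line, suitable for handing to an audit tool.
        return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in self.trail)

gate = RuntimeGate(max_amount=5000)
first = gate.submit("agent-1", "create_po", {"amount": 1200}, "v1")
second = gate.submit("agent-1", "create_po", {"amount": 9000}, "v1")
gate.halt("agent-1")
third = gate.submit("agent-1", "create_po", {"amount": 100}, "v1")
```

Every attempt is logged, including the blocked and flagged ones, so the trail shows what the agent tried to do as well as what it was allowed to do.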

Data Architects

Architects need to extend business logic beyond individual apps and integrate it into a central, controlled semantic layer. When metric definitions are in agent code or prompt templates, they can’t be seen or checked. Role-based access controls must explicitly encompass agent identities, and KPI definitions should be subject to version control, adhering to the same rigor as production software. At human speed, fragmented logic is easy to handle. It becomes structurally dangerous when operated by autonomous AI.
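As a rough sketch of what versioned KPI definitions with agent-aware access control might look like, the example below keeps every published version of a metric and checks the caller’s role before returning a definition. `MetricRegistry`, `MetricDefinition`, the role names, and the SQL-like expressions are assumptions made for illustration; a real semantic layer would expose its own interfaces.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class MetricDefinition:
    name: str
    version: int
    expression: str
    allowed_roles: frozenset  # roles may belong to people or to agents

class MetricRegistry:
    """Hypothetical central store of versioned metric definitions."""

    def __init__(self):
        self._versions = {}  # metric name -> list of definitions, oldest first

    def publish(self, name, expression, allowed_roles):
        history = self._versions.setdefault(name, [])
        definition = MetricDefinition(
            name, len(history) + 1, expression, frozenset(allowed_roles)
        )
        history.append(definition)  # earlier versions are never overwritten
        return definition

    def resolve(self, name, role, version=None):
        history = self._versions[name]
        definition = history[-1] if version is None else history[version - 1]
        if role not in definition.allowed_roles:
            raise PermissionError(f"role {role!r} may not read metric {name!r}")
        return definition

registry = MetricRegistry()
registry.publish("net_revenue", "SUM(gross) - SUM(refunds)", {"finance_agent"})
registry.publish(
    "net_revenue",
    "SUM(gross) - SUM(refunds) - SUM(discounts)",
    {"finance_agent", "pricing_agent"},
)

latest = registry.resolve("net_revenue", role="pricing_agent")
original = registry.resolve("net_revenue", role="finance_agent", version=1)
```

Because agents resolve definitions from the registry rather than carrying them in code or prompt templates, an auditor can see exactly which version of a metric an agent was entitled to use at any point.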

AI Leaders

Agents shouldn’t always have the same amount of freedom to act in all situations. AI leaders need to adjust the level of autonomy based on workflow sensitivity and system maturity. Organizations can gradually increase their agents’ capabilities with confidence when they have clear system boundaries and defined scopes of action.
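A simple way to express this calibration is to cap autonomy by both workflow sensitivity and system maturity and let the stricter cap win. The tiers, labels, and mappings below are hypothetical; each organization would define its own.

```python
from enum import IntEnum

class Autonomy(IntEnum):
    SUGGEST_ONLY = 0       # agent recommends, a human acts
    ACT_WITH_APPROVAL = 1  # agent acts after human sign-off
    ACT_AUTONOMOUSLY = 2   # agent acts within its defined scope

# Assumed caps: more sensitive workflows allow less autonomy.
SENSITIVITY_CAP = {
    "high": Autonomy.SUGGEST_ONLY,
    "medium": Autonomy.ACT_WITH_APPROVAL,
    "low": Autonomy.ACT_AUTONOMOUSLY,
}

# Assumed caps: less mature systems allow less autonomy.
MATURITY_CAP = {
    "pilot": Autonomy.SUGGEST_ONLY,
    "validated": Autonomy.ACT_WITH_APPROVAL,
    "proven": Autonomy.ACT_AUTONOMOUSLY,
}

def allowed_autonomy(sensitivity, maturity):
    # The stricter of the two caps determines what the agent may do.
    return min(SENSITIVITY_CAP[sensitivity], MATURITY_CAP[maturity])

print(allowed_autonomy("low", "validated").name)
```

Raising an agent’s autonomy then becomes an explicit change to one of the two mappings, which can be reviewed and approved like any other policy change.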

Data Governance vs. AI Agent Governance

Data governance was built on the idea that people made decisions and data was the input. It focused on data quality, access permissions, and lineage to ensure that the right people could access accurate, traceable information.

AI agent governance includes all of that and goes even further. When agents act on their own, governance must also deal with decision traceability, runtime monitoring, semantic consistency, autonomy constraints, and accountability mapping.

The difference is important because the stakes have changed. Poor data governance produces misleading reports. Poor agent governance produces harmful actions. The first is a visibility problem. The second is an operational one.

AI agent governance does not replace conventional data governance. It broadens it to include operational autonomy.

Why Semantic Governance Is Central to AI Agent Control

AI agents don’t understand metrics the same way people do. They process definitions programmatically, so an inconsistency is not caught in context. It gets operationalized.

When different agents use different definitions of the same KPI, decisions don’t match up across systems. Recommendations differ. And when something goes wrong, it’s not easy to find the source.

“We’ve lived in this data-first world, and we’ve treated metadata and semantics as second-class citizens. But AI is forcing us to say that this stuff is the foundation,” says Juan Sequeda, Principal Researcher at ServiceNow, in an AtScale podcast.

Version-controlled metric definitions enable governance teams to analyze agent behavior. For analytics leaders, centralized KPI logic prevents metric drift from quietly accumulating across agent outputs. Semantic abstraction at the model level lowers the chance that different applications will carry business logic that doesn’t match.

This is where the semantic layer becomes governance infrastructure rather than just a tool for analytics. Platforms like AtScale bring together and manage metric definitions across BI and AI systems, creating a unified, consistent semantic foundation that agents can always rely on.

Deterministic metric logic is the first step in AI agent governance. Everything downstream is unstable without it.

How Enterprises Are Beginning to Govern AI Agents

Although agentic AI governance is still in its early stages, consistent patterns are developing in several areas. Companies are realizing how critical it is to keep agents in check, and they are establishing frameworks and internal resources to lay the necessary groundwork, such as:

- AI governance councils: These multifaceted, cross-functional teams are being established within the organization to collaborate on producing guidelines and determining which behaviors are allowable for agents.

- Centralized AI oversight teams: With a more granular focus, dedicated teams are being allocated toward monitoring the activity of agents, addressing incidents, and auditing their performance.

- Formalized semantic layers: Organizations are consolidating standardized definitions of the metrics and terms agents draw on into centralized models that serve as the official context layer for all agent interactions.

- Policy-driven AI orchestration: There’s a big push to ensure policies are enforced before and during the agents’ actions, rather than applied as remedial fixes after the fact. To that end, organizations are proactively building clearly defined business rules and limitations into orchestration frameworks for agents.

- Risk review boards: Cross-functional leadership teams involving legal, compliance, data, and technology come together to collaborate on agent risk reviews before widespread deployment of autonomous systems.

This is a signal that CDAOs should pay attention to. Governance maturity is becoming a competitive edge. Organizations that can deploy agents with confidence because their definitions are clear and their controls are verifiable will move faster than those still working out the fundamentals.

AI Autonomy Requires Architecture Moving Forward

As AI agents take on a greater role within enterprise systems, the governance model must evolve at the same pace. Once AI agents make decisions faster than review cycles can keep pace, reactive oversight will quickly become obsolete. The governance model must be embedded within the system’s architecture.

For example, semantic definitions must be centralized, automated oversight must be built into the system, and accountability must be defined before deployment (not after an incident).

The companies best positioned to scale agentic AI won’t necessarily have the most sophisticated models; they’ll have the strongest foundations. These are the conditions necessary to support sustained innovation. After all, autonomy without architecture is simply speed without direction.

When evaluating your company’s readiness to scale AI agents, the structural questions are most important. Are all of the systems that an agent could interact with using the same definitions for your metrics? Is your business logic stored in one location and up to date?

AtScale provides businesses with a reliable source of truth for their analytics and AI agents. A centralized semantic layer is not optional for organizations serious about implementing governance over agentic AI. Rather, it should be considered foundational infrastructure.

Ultimately, scaling AI agents successfully isn’t about moving faster; it’s about building on a governed, unified foundation where every decision is grounded in consistent, trusted context. If you’re ready to put that foundation in place, connect with our team to get started.
